Forget everything you thought you knew about voice assistants. That clunky, robotic voice that takes five seconds to look up the weather? It’s a dinosaur. In a span of just 24 hours, OpenAI & Google paraded out their new AI models, GPT-4o & Project Astra, and they’re not just an upgrade; they’re a complete paradigm shift. We’ve officially entered the era of real-time, emotionally aware, voice-first AI that feels less like a tool & more like a collaborator. This isn’t science fiction anymore. Well, mostly.

GPT-4o: Your AI Just Got a Personality

OpenAI’s “Spring Update” event wasn’t about some boring new enterprise feature. It was a live demo of GPT-4o (“o” for “omni”), and frankly, it was stunning. The key takeaway? Speed & emotion. This isn’t just another language model; it’s a single, unified model that natively understands text, audio, & vision. Why does that matter? Because the old way was a janky pipeline: a speech-to-text model transcribed your words, then an LLM like GPT-4 figured out a response, & finally, a text-to-speech model read it back. It was slow & lost all nuance.
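To make the difference concrete, here is a toy sketch of that three-stage pipeline next to a single end-to-end model. Everything in it is a hypothetical stand-in (`AudioClip`, `speech_to_text`, `language_model`, `text_to_speech`, `unified_model`); none of it is OpenAI’s actual code. It only illustrates why tone gets lost once speech is flattened into text.

```python
from dataclasses import dataclass


@dataclass
class AudioClip:
    """Toy stand-in for recorded speech: the words plus how they were said."""
    words: str
    tone: str  # e.g. "sarcastic", "excited", "neutral"


def speech_to_text(clip: AudioClip) -> str:
    # Stage 1: transcription keeps the words and discards the tone.
    return clip.words


def language_model(prompt: str) -> str:
    # Stage 2: a placeholder "LLM" that only ever sees plain text.
    return f"You said: {prompt}"


def text_to_speech(text: str) -> AudioClip:
    # Stage 3: synthesis has no tone to work from, so it falls back to neutral.
    return AudioClip(words=text, tone="neutral")


def cascaded_pipeline(clip: AudioClip) -> AudioClip:
    # The old approach: three separate models chained together.
    return text_to_speech(language_model(speech_to_text(clip)))


def unified_model(clip: AudioClip) -> AudioClip:
    # Hypothetical single model: it receives the whole clip,
    # so the tone is still available when the reply is produced.
    return AudioClip(words=f"You said: {clip.words}", tone=clip.tone)


question = AudioClip(words="great, another meeting", tone="sarcastic")
print(cascaded_pipeline(question))
print(unified_model(question))
```

In the cascaded version the sarcasm never makes it past stage 1, so no later stage can react to it. Each stage also adds its own processing time before the next one can start.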

This old method led to painful delays. The previous Voice Mode on GPT-4 averaged around 5.4 seconds of latency. Who has time for that? GPT-4o smashes that figure. It responds to audio in as little as 232 milliseconds, with an average of 320 milliseconds. That’s on par with a human’s typical response time in a conversation. It can be interrupted, it can understand your tone, & it can even laugh or sing. It feels present, not like it’s processing a query on a server farm somewhere in Virginia.
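For a sense of scale, here is the back-of-the-envelope arithmetic on those figures. The constant and function names are just illustrative; the numbers are the ones quoted above.

```python
OLD_VOICE_MODE_S = 5.4    # latency quoted for the previous GPT-4 Voice Mode
GPT_4O_AVERAGE_S = 0.320  # GPT-4o average audio response time
GPT_4O_BEST_S = 0.232     # GPT-4o best-case audio response time


def speedup(old_seconds: float, new_seconds: float) -> float:
    """How many times faster the new latency is than the old one."""
    return old_seconds / new_seconds


print(f"Average case: {speedup(OLD_VOICE_MODE_S, GPT_4O_AVERAGE_S):.1f}x faster")
print(f"Best case:    {speedup(OLD_VOICE_MODE_S, GPT_4O_BEST_S):.1f}x faster")
```

That works out to roughly a 17x improvement on average, and a bit over 23x in the best case.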

So, What Can It Actually Do?

The demos were designed to wow, & they did. We saw GPT-4o acting as a real-time translator between two people speaking different languages, with zero awkward pauses. We watched it look at a math problem on a piece of paper through a phone’s camera & guide a student through solving it, not by giving the answer, but by offering hints & encouragement. It changed its tone from playful to dramatic on command. It’s the kind of stuff you’d expect from the movie *Her*, & it’s rolling out now.

And here’s the kicker for developers & businesses: the GPT-4o API is a game-changer. It’s 2x faster & 50% cheaper than the already impressive GPT-4 Turbo. This move makes building sophisticated, real-time voice & vision applications accessible to a much wider range of creators. The free tier of ChatGPT is also getting access to GPT-4o (with usage limits, of course), bringing this power to millions of users.
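If you want to kick the tires, here is a minimal sketch of a text-plus-image request using the official `openai` Python package and its Chat Completions endpoint. The helper names `build_vision_request` and `ask` are made up for this example, the image is assumed to be a PNG, and the API key is assumed to live in the `OPENAI_API_KEY` environment variable. Treat it as a starting point, not a reference implementation.

```python
import base64


def build_vision_request(question: str, image_bytes: bytes) -> dict:
    """Build a Chat Completions payload with one text part and one image part."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": "gpt-4o",
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {
                        "type": "image_url",
                        # Assumption: the image is a PNG sent inline as a data URL.
                        "image_url": {"url": f"data:image/png;base64,{encoded}"},
                    },
                ],
            }
        ],
    }


def ask(request: dict) -> str:
    """Send the payload to the API and return the model's text reply."""
    # Imported here so the payload builder works without the SDK installed.
    from openai import OpenAI

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(**request)
    return response.choices[0].message.content
```

Usage would look something like `ask(build_vision_request("What is the next step in this math problem?", open("homework.png", "rb").read()))`, where `homework.png` is a placeholder for your own file.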

Project Astra: Google’s Universal AI Agent

Not to be outdone, Google took the stage at its I/O conference the very next day to show off Project Astra. If GPT-4o is the ultimate conversationalist, Astra is Google’s vision for the ultimate AI agent: an AI that remembers what it sees & hears to build a contextual understanding of your world.

The demo video, presented as a “continuous one-take,” showed a user pointing their phone camera at various objects. The AI, speaking in a natural, responsive voice, correctly identified a part of a speaker, explained a block of code on a monitor, & even remembered where the user left their glasses a minute earlier by recalling the visual stream. It’s designed to be a “multimodal” model from the ground up, just like GPT-4o, built on Google’s Gemini family of models.

Of course, the slick demo drew some skepticism. Was it real-time? Google later clarified that while the video was shortened for length, the sequence of agent interactions happened as shown. The goal of Project Astra isn’t just to be a reactive chatbot; it’s to be a proactive assistant that can genuinely help you navigate your environment. Imagine an AI that can help you find your keys, remind you of a person’s name at a conference by looking at them, or give you step-by-step repair instructions while watching you work. That’s the future Google is building toward.

Why This Is a Bigger Deal Than You Think

This isn’t just about cooler chatbots. This is a fundamental shift in human-computer interaction. For decades, we’ve adapted to the computer’s language: clicks, taps, & keywords. Now, for the first time, computers are adapting to ours, complete with tone, interruptions, & visual context.

Real-World Applications Are Already Here

- Education: OpenAI is already partnering with Khan Academy to provide free tutoring powered by GPT-4o. A patient, knowledgeable, and always-available tutor is no longer a luxury.

- Accessibility: Imagine an app that can describe a person’s surroundings in rich, conversational detail for someone who is blind or has low vision. This technology makes that a reality.

- Work & Productivity: The new ChatGPT desktop app for macOS (Windows version coming later) means the AI can see your screen. It can help you analyze charts, write code, or summarize long documents with a simple voice command. Your workflow is about to get a serious AI co-pilot.

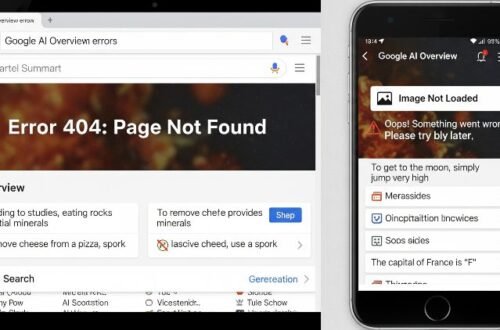

The Inevitable Catch: Ethics & The “Sky” Fiasco

It’s not all sunshine & sci-fi dreams. The more human-like these AIs become, the thornier the ethical questions get. OpenAI learned this the hard way almost immediately. One of the voice options, named “Sky,” sounded eerily similar to Scarlett Johansson’s AI character in *Her*. Johansson released a statement saying she had declined OpenAI’s offer to voice the system, & the public backlash was massive. OpenAI pulled the voice, insisting that it belonged to a different actress & that any similarity was unintentional.

Whoops. This incident is a perfect, flashing-red-light warning about the road ahead. Creating deeply personal & persuasive AI companions raises huge questions:

- Deception & Manipulation: How do we prevent these tools from being used to create hyper-realistic scams or to emotionally manipulate users?

- Privacy: Project Astra’s vision requires it to see & hear everything you do. Where is this data stored? How is it secured? Who has access? The privacy policies for these new tools will need intense scrutiny.

- Likeness & Identity: The “Sky” incident proved we need clear rules around AI voices & likenesses. You can’t just “create” a personality that sounds like a famous person without their consent. That’s just basic.

The Race Is On, Ready or Not

GPT-4o & Project Astra have fired the starting pistol on the next great tech race. This is the new battleground, and it’s not about who has the most parameters or the biggest dataset. It’s about who can create the most useful, seamless, & trustworthy AI companion. The slow, boring era of AI is over. The voice-first, always-on era has begun.

The tech is incredible, but it’s moving faster than our social & legal frameworks can keep up. As users, developers, & citizens, we have to be both excited by the possibilities & vigilant about the risks. The real challenge won’t be building the technology; it’ll be learning how to live with it. So go ahead, start talking to your phone. It’s finally ready to listen.