Microsoft just announced its grand new vision for the PC, and it’s called Recall. The pitch is simple & seductive: an AI-powered feature that gives your computer a photographic memory. It remembers everything you see & do, creating a perfectly searchable timeline of your digital life. Found a cool recipe on a random website last Tuesday? Can’t remember the name of that PDF your boss sent you? Just ask your PC. It’s supposed to be the ultimate productivity hack, a genius move to integrate AI deep into the OS. But as the details trickled out, the tech community had a collective spit-take. This “genius” feature looks an awful lot like a privacy & security disaster of epic proportions, a pre-packaged surveillance tool you install yourself.

What is This “Recall” Thing, Anyway?

Recall is the flagship feature of Microsoft’s new “Copilot+ PCs”. These are next-gen laptops equipped with powerful Neural Processing Units (NPUs) designed to handle AI tasks locally, on your device, instead of in the cloud. The idea is to make AI faster & more private. For Recall, that local processing is put to work on a steady stream of screenshots: it captures your active screen every few seconds. Yeah, you read that right. Every few seconds.

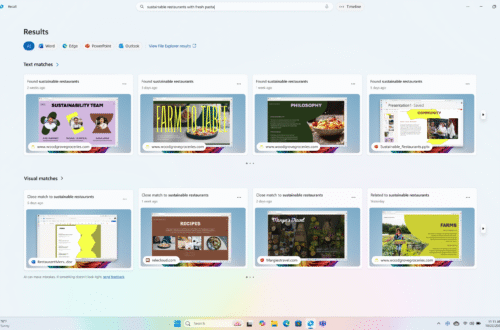

It then uses AI to analyze these pics for text & images, making them searchable. It captures everything: websites, documents, emails, chat messages in Signal or WhatsApp, passwords you type in visible fields, your online banking info, that weird thing you Googled at 3 AM. All of it gets stored in a database on your computer’s hard drive. Microsoft says this database can hold months of data, with a default allocation of 25GB that can be raised as high as 150GB on larger drives. The promise is that you can scroll back in time or search with natural language like “find that blue dress I was looking at on Amazon”, & Recall will pull up the exact moment it was on your screen.
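To make the capture, index, & search loop concrete, here is a minimal Python sketch. It is not Recall's code or schema: the `snapshots` table, the function names, & the sample text are all invented for illustration, the OCR step is skipped entirely (the "extracted" text is passed in by hand), & a plain substring match stands in for natural-language search.

```python
import sqlite3

def build_index():
    """Create an in-memory store. The schema is hypothetical, not Recall's."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE snapshots (ts REAL, app TEXT, text TEXT)")
    return db

def record_snapshot(db, ts, app, extracted_text):
    """Store the text an OCR step would have pulled out of one screenshot."""
    db.execute("INSERT INTO snapshots VALUES (?, ?, ?)", (ts, app, extracted_text))

def search(db, phrase):
    """Return (timestamp, app) for each snapshot whose text contains the phrase.

    SQLite's LIKE is case-insensitive for ASCII letters by default.
    """
    rows = db.execute(
        "SELECT ts, app FROM snapshots WHERE text LIKE ? ORDER BY ts",
        (f"%{phrase}%",),
    )
    return rows.fetchall()

db = build_index()
record_snapshot(db, 1.0, "browser", "Blue dress on Amazon, size M")
record_snapshot(db, 2.0, "mail", "Quarterly report attached")
record_snapshot(db, 3.0, "browser", "Online banking: account ending 1234")

print(search(db, "blue dress"))
print(search(db, "banking"))
```

Notice that the banking page is just as findable as the dress. An index like this doesn't know which rows are sensitive; it stores & returns whatever was on screen.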

A Hacker’s Wet Dream, Courtesy of Microsoft

From a security perspective, this is just mind-bogglingly reckless. Microsoft has essentially built a tool that does a hacker’s job for them. Think about how malware typically works. An attacker deploys an “infostealer” trojan. This malware has to sift through your system, looking for browser cookies, saved passwords, crypto wallets, & sensitive documents. It’s a bit of work.

With Recall, that whole process is streamlined. If an attacker gains access to your machine – and people get phished or infected with malware all the time – they don’t need to hunt for your secrets. Microsoft has already gathered them all into one convenient, queryable folder. Security researcher Kevin Beaumont, who has done phenomenal work dissecting this, put it bluntly: “Microsoft are building an infostealer into the core of Windows.”

The initial implementation was even worse. The database was stored as a simple, unencrypted SQLite file. Anyone with user-level access could just copy it. Researchers quickly built tools to prove it. One security pro created “TotalRecall,” a script that finds & exfiltrates the Recall database, parsing it for juicy info. It’s not a sophisticated hack; it’s basically just copying a file. This isn’t a theoretical vulnerability; it’s a “door’s wide open, please rob me” sign.
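Here's why "unencrypted SQLite file" is such a big deal, shown on a harmless mock database rather than anything from Recall. The file name, the `captured_text` table, & the sample row are all made up; the only point is that an unencrypted SQLite file can be copied & read by any code running as the same user, with no key & no prompt.

```python
import os
import shutil
import sqlite3
import tempfile

def make_mock_store(path):
    """Write a stand-in database. Table name and contents are invented."""
    db = sqlite3.connect(path)
    db.execute("CREATE TABLE captured_text (ts INTEGER, text TEXT)")
    db.execute("INSERT INTO captured_text VALUES (1, 'mock secret: hunter2')")
    db.commit()
    db.close()

def read_without_credentials(path):
    """Open the file the way any user-level process could: no key, no prompt."""
    db = sqlite3.connect(path)
    try:
        rows = db.execute("SELECT text FROM captured_text ORDER BY ts")
        return [row[0] for row in rows]
    finally:
        db.close()

workdir = tempfile.mkdtemp()
original = os.path.join(workdir, "mock_store.db")
copied = os.path.join(workdir, "copied_elsewhere.db")

make_mock_store(original)
shutil.copy(original, copied)  # "exfiltration" here is an ordinary file copy

print(read_without_credentials(copied))
```

Encrypting the disk doesn't change this picture once you're logged in, because the file is already readable to anything running under your account.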

The Frantic Backpedal & Damage Control

The backlash was immediate & brutal. Privacy advocates, security experts, & even casual users were horrified. The UK’s data watchdog, the Information Commissioner’s Office (ICO), immediately started asking questions. The pressure mounted so fast that Microsoft was forced into a massive, embarrassing retreat.

On June 7th, just before the feature was set to launch, Microsoft announced a series of major changes. Here’s the short version of their scramble:

- Opt-In, Not On-by-Default: Thank goodness. Initially, it looked like Recall would be on by default. They reversed course, making it an explicit opt-in choice during PC setup.

- Proof of Life Required: You now need to enroll in Windows Hello (face, fingerprint, or PIN) to enable Recall, and you have to authenticate again to view your timeline. This is a basic security step that should have been there from day one. Who thought shipping without it was a good idea?

- “Just-in-Time” Decryption: The database will now be encrypted & will only be decrypted when you authenticate with Windows Hello. This is a significant improvement, but it doesn’t solve the core problem. If malware is running on your PC with your privileges, it can potentially still access the data as you decrypt it for your own use.

- Delayed Rollout: In a follow-up announcement on June 13th, Microsoft pulled the broad June 18th launch. Recall has instead been punted to the Windows Insider Program for more testing. Translation: “We messed up, & we need a do-over before we face a global privacy firestorm.”

This walk-back is a win for common sense, but it doesn’t make the feature safe. It just makes it slightly less of an open goal for criminals.

Your PC, Your Privacy: Actionable Steps

Let’s be real. The best way to secure your data from Recall is to never, ever turn it on. When you get a new Copilot+ PC, you’ll be prompted during setup. Just say no. It’s that simple. But if you’re curious or have a very specific, high-value use case for it, you must be extremely careful.

If You’re Daring (or Foolish) Enough to Use Recall

If you absolutely must enable it, you need to micromanage it. You can control what it captures. Navigate to Settings > Privacy & security > Recall & snapshots. From there, you have a few options:

1. Filter Apps & Websites: You can explicitly exclude specific applications (like your password manager, banking apps, or secure messaging clients) from being snapshotted. You can also add specific websites (like your webmail or health portal) to an exclusion list. You should be aggressive here. If it has sensitive info, exclude it.

2. Pause & Delete: You can pause Recall at any time right from the system tray icon. If you’re about to do something sensitive, pause it. You can also delete individual snapshots or clear time ranges from the timeline. If you’ve just handled a password or a financial document, go delete that snapshot ASAP.

3. Manage Storage: You can see how much space Recall is using & delete the entire database if you get cold feet. It’s good practice to periodically nuke the whole thing & start fresh.
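If it helps to think about the filter & pause options as logic rather than settings toggles, here is a rough Python sketch of the decision a capture tool would have to make before each snapshot. This is an illustration of the idea, not how Recall implements it: `should_capture`, the app identifiers, & the example hosts are all hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical exclusion lists; the identifiers and hosts are made up.
EXCLUDED_APPS = {"password-manager", "signal", "banking-app"}
EXCLUDED_SITES = {"mail.example.com", "health.example.org"}

def should_capture(app, url=None, paused=False):
    """Decide whether a snapshot of the current window is allowed."""
    if paused:
        return False
    if app.lower() in EXCLUDED_APPS:
        return False
    if url:
        host = (urlparse(url).hostname or "").lower()
        for site in EXCLUDED_SITES:
            # Match the excluded host itself and any of its subdomains.
            if host == site or host.endswith("." + site):
                return False
    return True

print(should_capture("browser", "https://news.example.net/story"))               # True
print(should_capture("Signal"))                                                  # False
print(should_capture("browser", "https://mail.example.com/inbox"))               # False
print(should_capture("browser", "https://news.example.net/story", paused=True))  # False
```

The weakness is visible in the first line of output: anything you forgot to list gets captured. An exclusion list only protects what you thought of in advance, which is why being aggressive with it matters.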

The Bigger Picture: Trust is Earned, Not Assumed

This whole debacle is a stunning example of a tech giant getting high on its own AI supply. Microsoft was so focused on shipping a “killer AI feature” to compete with Apple & Google that it seemingly ignored fundamental security principles. The fact that it took public shaming from the security community to get the company to add basic protections like encryption & user authentication is deeply concerning.

It raises a bigger question about corporate responsibility in the AI era. Companies are racing to bake AI into every product, but who is responsible when these features create new risks? Recall isn’t just a flawed product; it’s a flawed philosophy. It presumes we want our digital lives recorded & indexed by default, trading immense privacy for a bit of convenience. It’s a bad trade. The initial design didn’t respect the user; it saw them as a data source to be indexed.

So, is Recall genius or a disaster? The idea of a perfect personal search engine is cool, but the implementation is a catastrophe in the making. Even with the new security bandages, you’re still creating a highly concentrated target of your most sensitive data on the device that’s most likely to be compromised. The convenience isn’t worth the risk. The pushback worked this time, but the push for more invasive AI won’t stop. Stay skeptical & stay safe out there.