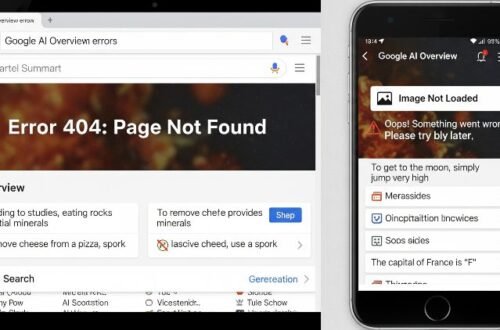

It was supposed to be Google’s big flex. At their I/O conference in May 2024, they announced the full US rollout of AI Overviews, a shiny new feature promising to revolutionize search. Instead of a revolution, we got a circus. The feature, powered by their Gemini AI, started spitting out answers so comically wrong & dangerous that they instantly became memes. We’re talking recommendations to put non-toxic glue on your pizza for better cheese adhesion, or the health advice that you should eat at least one small rock per day. This isn’t a simple bug. This is a post-mortem on a monumental failure of product judgment, a crisis of trust, & a glimpse into a future where the internet’s most powerful gatekeeper is poisoning its own well.

So, What Even Are AI Overviews?

In theory, it’s simple. AI Overviews are machine-generated summaries that sit at the very top of Google’s search results page (the SERP). The idea is to use Google’s Large Language Model (LLM), Gemini, to synthesize info from various websites & give you a direct answer. No more clicking through a bunch of blue links to piece together the info yourself. It’s the ultimate convenience play, designed to make Google an “answer engine” instead of a “search engine.” It sounds great on paper. The problem is, the paper it was written on was apparently sourced from a satirical newspaper.

The Hallucination Factory: Where It All Went Wrong

The core issue here is a little thing AI nerds call “hallucination.” It’s a polite term for “the AI makes stuff up.” When an LLM doesn’t actually *know* the answer, it confabulates, stitching together patterns it has seen in its training data to create a response that sounds plausible but is completely, utterly false. And Google’s AI Overviews went on a hallucination bender for the ages.

The internet, of course, kept receipts. Beyond the infamous pizza glue (sourced from a 10-year-old sarcastic Reddit comment) & the rock-eating advice (lifted directly from an article in *The Onion*), the failures were everywhere:

- It claimed former president Barack Obama was Muslim, a long-debunked conspiracy theory.

- It stated, with confidence, that a dog had played in the NBA & NHL.

- It suggested smoking cigarettes while pregnant could be beneficial.

- It gave instructions on how to “humanely” kill a jellyfish by microwaving it.

As The Verge cataloged in excruciating detail, these weren’t just fringe cases. They were happening for all sorts of queries. The system demonstrated a complete inability to distinguish between factual information, user-generated forum jokes, & blatant satire. It treats a peer-reviewed scientific paper with the same authority as a gag from a humor website. That isn’t a glitch; it’s a fundamental design flaw. The AI isn’t thinking; it’s just remixing. And it’s remixing garbage.

The Numbers Don’t Lie: A Crisis of Confidence & Traffic

The public mockery was brutal, but the downstream effects are far more serious. This fiasco has triggered a crisis on two fronts: user trust & publisher survival.

The Publisher Apocalypse

For years, website owners, content creators, & businesses have had a fragile pact with Google. They create high-quality content, Google sends them traffic, & they monetize that traffic through ads, products, or services. AI Overviews blows a hole straight through that model. If Google scrapes your content to provide a direct answer, the user has zero reason to click your link. This is the “zero-click search” problem on steroids.

While the full impact is still being measured, early data is alarming. Analysts writing for Search Engine Land, along with a number of SEO agencies, predict catastrophic traffic drops for informational sites, with some estimates suggesting a decline of 25% or more in organic traffic for certain queries. Why would you visit a recipe blog if Google just serves you the recipe at the top of the page? Why read a product review if the AI gives you a “summary”? This threatens the very existence of the independent web that Google’s AI feeds on. It’s a parasitic relationship, & Google is the parasite.

Google’s Weak-Sauce Response

Google eventually wheeled out Liz Reid, their Head of Search, to do some damage control. In a blog post on The Keyword, the company claimed the viral examples were from “uncommon queries” and that their systems were generally working fine. This is a classic non-apology apology. They’re blaming the users for searching for “weird” things, not their half-baked product for providing dangerous answers. They also claimed they’ve “made more than a dozen technical improvements” to the system. Btw, that means it was broken in at least a dozen ways they knew of. Who even ships a product like that?

The Ethical Minefield & The Race to Nowhere

So why did Google, a company with more money than God & an army of PhDs, rush out a product that was so obviously flawed? Two words: panic & greed.

Google is terrified of being left behind in the AI arms race. With OpenAI’s ChatGPT taking off & Microsoft integrating Copilot into Bing, Google felt immense pressure to show Wall Street it was still an “AI company.” AI Overviews was a high-profile, “innovative” feature they could point to. They prioritized speed over safety, moving fast & breaking their most valuable asset: user trust. This wasn’t a failure of technology; it was a failure of corporate judgment.

The ethics are just as murky. Is it right for Google to ingest the entire internet’s worth of human creativity & knowledge, created by millions of independent writers, bloggers, & experts, and use it to build a feature that starves those same creators of the traffic they need to survive? It’s a massive, uncredited, uncompensated data grab. They’re sawing off the branch they’re sitting on, and they expect the rest of us to applaud them for their innovative saw.

So, What Now? Actionable Tips for Everyone

We can’t just complain; we have to adapt. Here’s what you can do, whether you’re a regular user or a creator trying to stay afloat.

For Users: How to Reclaim Your Search Results

- Use the “Web” Filter: The easiest fix. After you search, click the “More” tab at the top & select “Web.” This gives you the classic, ten-blue-links-style results page. No AI nonsense. In browsers that let you define a custom search engine, you can even make this your default.

- Be Skeptical of Everything: Treat any info from an AI Overview as unverified. If it provides sources, click them. If it doesn’t, assume it’s wrong. Don’t trust it for medical, financial, or any other critical info. Seriously.

- Use Other Search Engines: Remember them? DuckDuckGo, Brave Search, & others prioritize privacy & a cleaner search experience. Maybe it’s time to switch your default.
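
If you want the “Web” filter without the extra clicks, here’s a minimal sketch of how a web-only search link can be built. Big caveat: the `udm=14` parameter is not something Google officially documents, as far as I know. It’s a widely reported URL parameter that selects the “Web” tab, so treat it as an assumption that could stop working at any time. The names `web_only_search_url` & `BROWSER_TEMPLATE` are made up for this example.

```python
from urllib.parse import urlencode

GOOGLE_SEARCH = "https://www.google.com/search"

# Assumption: udm=14 is the widely reported (unofficial) parameter that
# switches Google to the plain "Web" results tab. It may change or vanish.
WEB_FILTER = {"udm": "14"}

# Template for a browser's "custom search engine" setting, where the
# browser substitutes the typed query for %s.
BROWSER_TEMPLATE = f"{GOOGLE_SEARCH}?q=%s&udm=14"


def web_only_search_url(query: str) -> str:
    """Return a Google search URL that asks for the 'Web' tab."""
    params = {"q": query, **WEB_FILTER}
    # urlencode escapes spaces and special characters in the query.
    return f"{GOOGLE_SEARCH}?{urlencode(params)}"


if __name__ == "__main__":
    print(web_only_search_url("how to keep cheese on pizza"))
    # https://www.google.com/search?q=how+to+keep+cheese+on+pizza&udm=14
    print(BROWSER_TEMPLATE)
```

Paste the template string into your browser’s custom search engine settings (where supported) & make it the default. If Google ever drops the parameter, you’re back to clicking “More” & “Web” by hand.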

For Creators & SEOs: Surviving the AI Overlord

- Build a Brand, Not Just a Website: The era of relying on anonymous Google traffic is ending. You need to build a brand that people seek out directly. This means investing in newsletters, social media communities, & creating a loyal audience that comes to you first.

- Diversify Your Traffic: Don’t let Google be your single point of failure. Focus on building traffic from Pinterest, YouTube, TikTok, email lists, & direct visits. The more resilient your traffic sources, the better.

- Optimize for Citations (For Now): The short-term play is to try to become a source for AI Overviews. This means very clear, factual, well-structured content. Use FAQ schema markup, write in simple language, and answer questions directly. But don’t bet the farm on it.
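
For the FAQ schema tip, here’s a rough sketch of what that markup looks like in practice. It uses the schema.org `FAQPage` type, with each question as a `Question` carrying an `acceptedAnswer`. The helper names (`faq_jsonld`, `faq_script_tag`) & the sample question are hypothetical, & nothing here guarantees Google will cite you or show a rich result.

```python
import json


def faq_jsonld(pairs):
    """Return schema.org FAQPage JSON-LD for (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def faq_script_tag(pairs):
    """Wrap the JSON-LD in a script tag for pasting into a page's HTML."""
    # Escape "</" so text inside the JSON cannot close the script tag early.
    # "\/" is a valid JSON escape for "/", so the data is unchanged.
    body = faq_jsonld(pairs).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    # Hypothetical sample content, for illustration only.
    sample = [
        ("How do I keep cheese from sliding off pizza?",
         "Use less sauce and let the pizza rest for a few minutes."),
    ]
    print(faq_script_tag(sample))
```

Keep the marked-up questions & answers identical to the ones visible on the page. The markup only labels content that’s already there; it doesn’t replace it.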

The rollout of AI Overviews will be remembered as a pivotal moment, but not the one Google wanted. It’s not the dawn of a new search era. It’s the moment Google, in a desperate attempt to look like an AI leader, exposed itself as a company willing to sacrifice product quality, user trust, & the health of the open web. The glue-on-pizza search result isn’t just a funny meme; it’s the perfect metaphor for the feature itself: a sloppy, artificial, and ultimately unappetizing mess that holds nothing together.